I recently checked out Apache NiFi for the first time to run through a "big data" processing demo. NiFi is an environment for running flow-based data processing programs. Although it is new to the Apache Software Foundation, it lived a previous life as the NSA's "NiagaraFiles" project. I didn't know the NSA shared, but apparently they do.

NiFi is interesting, different, and surprisingly... fun. Drag and drop a flow-based program! But as with all technologies, understanding the NiFi mindset is more important than a strict analysis of its current capabilities. And the NiFi mindset is both distinctive and challenging. Distinctive in that NiFi emphasizes developing, monitoring, managing, and troubleshooting a running system in production. Challenging in that most current systems emphasize the dev -> test -> prod promotion pattern that NiFi seems to ignore.

Out of the Box

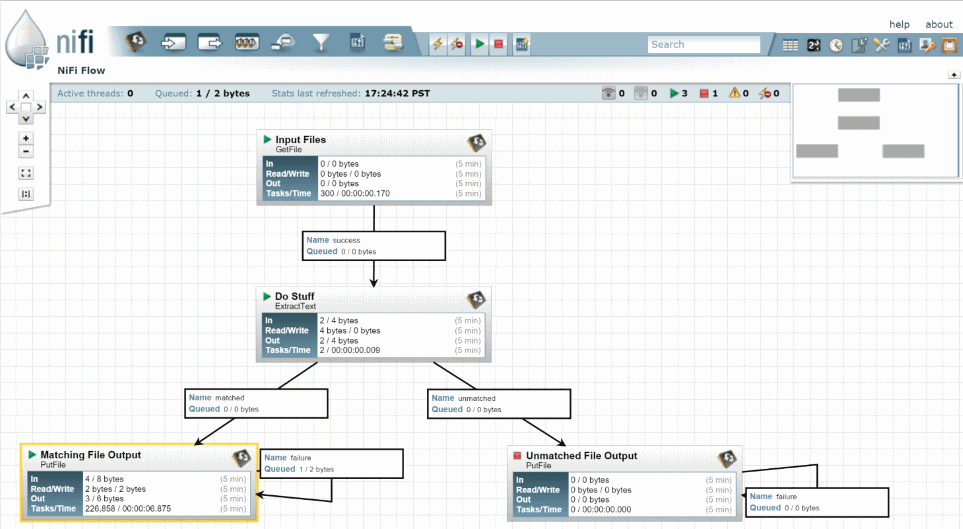

The out-of-the-box experience was very good. NiFi is easy to install as a folder of files, with convenient Linux shell/Windows batch scripts to start it up. The footprint isn't heavy; it can easily run on a laptop for learning and experimentation. Authentication is off by default. That surprised me at first, but I understand better now that I've read some of the security docs. NiFi runs a web server so you can bring up the admin UI in your browser. Most dev tasks can be performed by clicking around the UI, dragging, configuring, and connecting "processors" that work over your data. The UI is also a monitoring tool, so you can see how your running flow is performing, identify problems and bottlenecks, and take immediate action. Very cool.

Smells Like... NSA

Several aspects of NiFi clearly show off its NSA heritage, and I think it's worth experimenting with NiFi to get a sense of it, if for no other reason. NiFi was built to manage a relentless flow of messages or files. It assumes the flow will need to evolve dynamically in production. The designers put a lot of thought into queueing, retries, prioritization, expiration, and throughput optimization. The terminology used in NiFi doesn't quite match the buzzwords dripping off contemporary apps. NiFi has a "provenance" system for tracking data through the flow. There is "backpressure" for managing incoming vs. outgoing flow rates, which again suggests considerable experience with high volumes. Security is unsurprisingly strong, and based on certificates by default. I absolutely enjoyed imagining how my phone and email records might be routed through such a system.
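To make "backpressure" a little more concrete, here is a minimal Python sketch of the general idea: a bounded queue sits between two steps, and the upstream step is refused once the queue is full. This is my own illustration, not NiFi's implementation. The `Connection` class and its `offer`/`poll` methods are hypothetical names, and the limit of three items is arbitrary.

```python
import queue


class Connection:
    """A bounded queue between two processing steps (hypothetical, not NiFi code)."""

    def __init__(self, max_items: int) -> None:
        self._queue = queue.Queue(maxsize=max_items)

    def offer(self, item) -> bool:
        """Try to enqueue an item; return False when the queue is full."""
        try:
            self._queue.put_nowait(item)
            return True
        except queue.Full:
            # Backpressure: the upstream step must wait, retry, or slow down.
            return False

    def poll(self):
        """Take the oldest item, or return None when the queue is empty."""
        try:
            return self._queue.get_nowait()
        except queue.Empty:
            return None


def run_demo():
    conn = Connection(max_items=3)
    # The producer offers five items, but only three fit.
    accepted = [conn.offer(n) for n in range(5)]
    # The consumer drains one item, which frees a slot for the producer.
    drained = conn.poll()
    accepted_after_drain = conn.offer(99)
    return accepted, drained, accepted_after_drain


if __name__ == "__main__":
    print(run_demo())
```

The point of the design is that a slow consumer pushes its slowness back upstream instead of letting work pile up without limit.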

Mindset Gap

NiFi's NSA heritage also leaves a mindset gap that will challenge a lot of private sector adopters, especially in development process and security.

NiFi Process

NiFi has no built-in concept for creating a new flow program, or saving your flow program. There is only THE flow program. The UI provides tools to start, stop, modify, and monitor it. But that's your flow.

I really like the live production flow concept; it resonates with my experience in operations roles overseeing production systems. As an ops guy, I've often wished that monitoring and production-readiness were built in, and with NiFi they pretty much are. But NiFi accomplishes its production-ness by combining the live data with the flow definition (think "code"). This prevents NiFi from providing an obvious dev -> test -> production cycle as we have become used to with code. It is a fascinating design decision, and it's going to drive companies crazy.

While nobody will argue against testing, testing isn't a substitute for monitoring and managing what is actually happening in production. And yet, many engineering teams shortchange production monitoring and management in favor of testing, confident that any code passing their tests may be thrown over the wall to ops without concern. In contrast, NiFi embraces the belief that the production system is the only system, preferring to manage changes in place. But that means you can't really throw code over a wall, even if you want to, because there is a whole running system with data mid-process.

This isn't 100% true, of course. NiFi has mechanisms to import templated flow artifacts that were prepared elsewhere. And processor components are Java code built, tested, and deployed much like any other code. But the absence of an obvious dev -> test -> prod deployment pattern will confound the prejudices of most engineering teams, who will want to -- NEED to -- map their existing process models onto NiFi. Figuring this out is going to be a big challenge.

NiFi Security

NiFi's recommended security model is based on SSL certificates on client and server. No username/password. Security based on certificates is strong, but it is not lightweight, and small or temporary organizations will not want to have to manage certificates for security. I believe the reason authentication is turned off by default is that certificates are too challenging a subject for the 100-level evaluator.
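For anyone who hasn't worked with certificates on both ends of a connection, here is a rough Python sketch of what the server side of that arrangement looks like in general. It is not NiFi configuration (NiFi is Java and has its own settings); it only illustrates the idea that the server refuses clients that can't present a certificate it trusts. The function name and the file paths in the usage comment are placeholders I made up.

```python
import ssl
from typing import Optional


def build_server_context(
    cert_file: Optional[str] = None,
    key_file: Optional[str] = None,
    trusted_ca_file: Optional[str] = None,
) -> ssl.SSLContext:
    """Build a TLS server context that demands a client certificate."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    # Reject any client that does not present a certificate we can verify.
    ctx.verify_mode = ssl.CERT_REQUIRED
    if cert_file:
        # The server's own identity: its certificate and private key.
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    if trusted_ca_file:
        # The authority (or authorities) whose client certificates we accept.
        ctx.load_verify_locations(cafile=trusted_ca_file)
    return ctx


# Hypothetical usage; these paths are placeholders, not NiFi defaults:
# ctx = build_server_context("server.pem", "server.key", "trusted-ca.pem")
```

Every one of those files has to be issued, distributed, and eventually rotated, which is the overhead a small team will feel.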

NiFi does apparently have an extensible authentication and authorization mechanism, but certificates are either the only scheme currently implemented or the only one documented.

I understand LDAP integration is coming out soon, which makes sense, but I predict there will be a lot more work done to dumb down security, er, I mean make it more accessible to a wide range of users.

Compatibility with AWS

My apps run in AWS, so part of my evaluation was figuring out how hard it would be to integrate NiFi with AWS infrastructure. The good news: current versions of NiFi ship with processor components that work with S3, SQS, and SNS. The bad news: the AWS security story isn't quite right in NiFi. It requires user API keys to be configured on each processor rather than using the instance role or shared profiles.

Also, there are many other AWS services that might be supported, especially Lambda. NiFi is open source, after all, so the determined AWS and NiFi user might get on that instead of complaining about it.
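To show what I mean by the credentials complaint, here is a simplified Python sketch of the kind of fallback lookup I would like to see: explicit keys only if someone insists, then the standard environment variables, then a shared profile, and finally the instance role. It is an illustration of the idea, not NiFi code and not the AWS SDK's actual provider chain (which has more steps). The `resolve_credentials` function is hypothetical, and the instance role step is only a marker here; a real implementation would fetch temporary credentials from the instance.

```python
import configparser
import os
from typing import Mapping, Optional, Tuple

Keys = Tuple[str, str]


def resolve_credentials(
    explicit: Optional[Keys] = None,
    env: Optional[Mapping[str, str]] = None,
    credentials_file: Optional[str] = None,
    profile: str = "default",
) -> Tuple[str, Optional[Keys]]:
    """Return (source, keys); keys is None when deferring to the instance role."""
    # 1. Keys typed into the processor configuration (what NiFi required of me).
    if explicit:
        return "explicit", explicit

    # 2. The standard AWS environment variables.
    env = os.environ if env is None else env
    key_id = env.get("AWS_ACCESS_KEY_ID")
    secret = env.get("AWS_SECRET_ACCESS_KEY")
    if key_id and secret:
        return "environment", (key_id, secret)

    # 3. A named profile in the shared credentials file.
    path = credentials_file or os.path.expanduser("~/.aws/credentials")
    parser = configparser.ConfigParser()
    parser.read(path)  # a missing file is silently skipped
    if parser.has_section(profile):
        section = parser[profile]
        if "aws_access_key_id" in section and "aws_secret_access_key" in section:
            return "shared-profile", (
                section["aws_access_key_id"],
                section["aws_secret_access_key"],
            )

    # 4. Nothing configured: defer to the role attached to the instance.
    return "instance-role", None


if __name__ == "__main__":
    source, _ = resolve_credentials()
    print(f"credentials would come from: {source}")
```

With a lookup like this, a flow running on an EC2 instance with a role attached would need no keys stored in the flow at all.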

Net Impression

NiFi is interesting and well worth checking out, and it certainly left a very positive impression on me. It seems surprisingly easy to get NiFi to start doing useful work immediately. But there is a lot more to learn here, starting with how to integrate it into my development, deployment, and monitoring practices.